February 5, 2026

Anthropic, S&P 500, AI

By Mark Lacey

Software Engineering’s New Job: Write Specs, Not Code

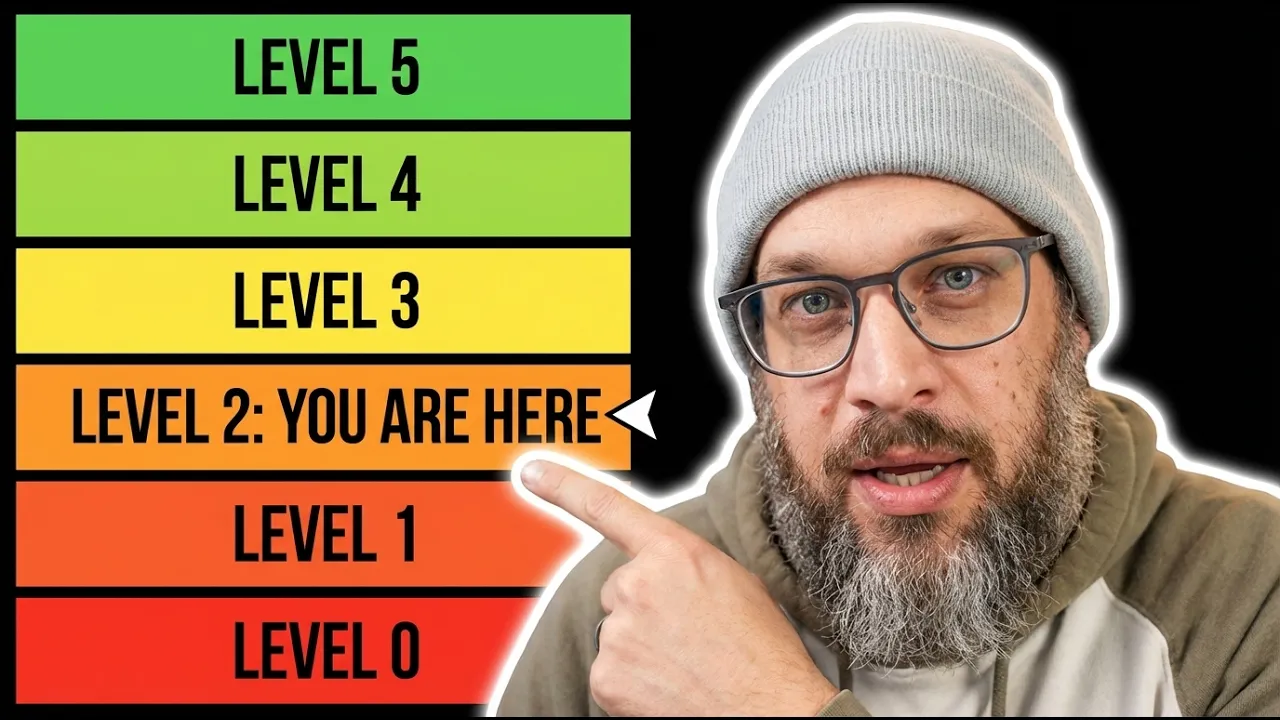

The state of AI coding is changing fast. A new framework, the five levels of AI (“vibe”) coding, shows how the competitive playing field is shifting from implementation speed to specification quality. In other words, software engineers are increasingly becoming product thinkers, and human judgment (clarifying intent, defining success, and evaluating outcomes) is becoming the crucial skill for keeping pace with the accelerating AI frontier.

PM2NET takes a different approach with our Human First AI Governance. We believe validation, testing, and risk mitigation are more important than speed—especially at this early stage. We also believe it’s critical to understand the scope of the current acceleration of AI agents, and particularly where AI is beginning to replace parts of what engineers traditionally do.

Icarus wasn’t punished for curiosity, but for unbounded control

PM2NET Human First governance puts human checkpoints at the start, because provenance belongs in the stack rather than bolted on later. That means keeping humans in the loop at critical decision points. What worries us is the speed at which AI is redefining the engineer. As the video featured below breathlessly points out, many teams are skipping the crucial step of writing comprehensive specs. Too much of the industry has pulled the guardrails off without understanding the nuances of AI, especially the material differences between models and versions.

We suspect the software world may follow the pattern we’re already seeing elsewhere: institutions rushing AI onto high-stakes front lines. The same recklessness is already confronting cybersecurity, and it moves us closer to a kind of “armageddon” scenario if governance doesn’t keep up.

We’ve spent decades calling software delivery a “process.” In reality, it’s a labyrinth—one we keep expanding, then act surprised when we get lost inside. Daedalus built a maze for a king who wanted certainty. The irony? The builder became the captive.

That’s your delivery lifecycle: a maze of rituals, tickets, approvals, and reviews—staffed by smart people and called “velocity.” Yet every quarter, defects slip through, audits sting, and incidents repeat.

Now we’re adding LLMs to that maze without discipline. We’re giving the labyrinth wings, but wings made of wax. In the myth, Icarus wasn’t punished for curiosity, but for unbounded control: no governor, no safety lattice, just altitude and ego until physics collects the debt.

That’s where most “AI for engineering” is headed: flashy demos, brittle outcomes, and failures that only surprise those who never wrote the rules down in a way a machine can’t ignore.

Why this matters now (and why governance can’t lag)

The episode I’m featuring, from Nate B. Jones’s AI News & Strategy Daily, is useful precisely because it doesn’t treat AI coding as a marginal productivity trick; it frames it as a structural change in how software is produced. The “five levels of vibe coding,” culminating in the “dark factory,” make one thing unmistakable: the industry is racing toward higher autonomy at a pace that most enterprise controls were never designed to handle.

That acceleration is exactly why Human First AI Governance matters more now than ever. Not because speed is inherently bad—but because speed without explicit intent, validation, and bounded autonomy turns small mistakes into systemic ones.

The real security shift: from “bad code” to “unchecked actions”

As engineering workflows become more agentic, the dominant risk isn’t simply “the model writes buggy code.” The bigger shift is that AI systems increasingly take actions across tools and environments—reading repos, generating changes, opening PRs, modifying infrastructure, querying internal docs, and sometimes executing steps automatically.

This changes the security question from:

“Did we ship a defect?”

to:

“Did we allow a semi-autonomous actor to do something we didn’t explicitly constrain, observe, and verify?”

Where things go wrong (in practical terms)

Without turning this into doomsday language, here are the concrete failure modes that become more likely as teams move up Nate’s ladder toward Levels 4 and 5:

Prompt injection becomes operational, not theoretical.

When an agent can browse, summarize, or ingest external/internal content and then act, malicious instructions can hitch a ride inside normal workflows—silently redirecting behavior.

Indirect prompt injection (“poisoning the well”).

Attackers can embed hidden instructions in webpages, docs, or issue content. When an agent reads that content, it may follow the attacker’s intent rather than the user’s.

Attribution and detection stay weak.

Many agent-driven compromises won’t look like a traditional “breach.” They look like normal activity—just the wrong outcomes. That makes detection and incident response harder.

Tool access expands blast radius.

The more integrated the agent is with build systems, CI/CD, secrets, ticketing, cloud consoles, and code owners, the more a single compromised workflow can cascade.

Social and identity attacks scale.

AI-assisted fraud (including fake candidate pipelines and insider-style access attempts) becomes cheaper and more convincing when the industry assumes automation is the default.
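One way to make “explicitly constrain, observe, and verify” concrete for tool access is a deny-by-default gate in front of every agent action. The Python below is a minimal sketch under our own assumptions: `ToolCall`, `ToolPolicy`, and tool names such as `git.open_pr` are hypothetical, not the API of any particular agent framework. It illustrates two ideas from the list above: limiting blast radius by allowing only explicitly listed tool/target pairs, and improving detection by logging every decision, including the allowed ones.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class ToolCall:
    """One action an agent wants to take (hypothetical shape)."""
    tool: str    # e.g. "git.open_pr" or "ci.trigger" (illustrative names)
    target: str  # the resource the action touches, e.g. a repo or environment


@dataclass
class ToolPolicy:
    """Explicit constraints: which tools may act on which targets."""
    allowed: dict[str, set[str]]  # tool name -> permitted targets
    audit_log: list[dict] = field(default_factory=list)

    def check(self, call: ToolCall) -> bool:
        """Deny by default; permit only listed tool/target pairs and log every decision."""
        permitted = call.target in self.allowed.get(call.tool, set())
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "tool": call.tool,
            "target": call.target,
            "decision": "allow" if permitted else "deny",
        })
        return permitted


policy = ToolPolicy(allowed={
    "git.open_pr": {"payments-service"},
    "ci.trigger": {"staging"},
})
print(policy.check(ToolCall("git.open_pr", "payments-service")))  # True
print(policy.check(ToolCall("ci.trigger", "production")))         # False: target not listed
print(policy.check(ToolCall("secrets.read", "vault")))            # False: tool not listed
```

Denying by default is the design choice that matters: a tool or target that an injected instruction tries to reach is rejected until a human adds it to the policy, rather than allowed until someone notices.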

What the video makes clear: specs are the control surface

A key point in Nate’s framework is that the bottleneck is moving from implementation speed to specification quality. That’s not just a productivity insight—it’s a governance insight.

If the “engineer” role shifts toward writing specs and evaluating outcomes (Level 4), then the spec becomes the policy, and validation becomes the guardrail. And if a “dark factory” (Level 5) ships from specs with no human intervention, then governance can’t be something you bolt on after the fact. It has to be designed into the workflow from the start.
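If the spec is the policy, it helps to write it in a form a machine can check. The sketch below assumes a simple shape of our own invention (`Spec`, `Scenario`, and `validate` are hypothetical names, not a real testing library): a human states the intent and a set of behavioral scenarios, and any candidate implementation, human- or agent-written, must pass them before it can ship. The `agent_candidate` function is a stand-in for generated code.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Scenario:
    """One behavioral expectation: given this input, the outcome must be this."""
    name: str
    given: Any
    expect: Any


@dataclass(frozen=True)
class Spec:
    """Human-authored intent plus the scenarios that define success."""
    intent: str
    scenarios: tuple[Scenario, ...]


def validate(spec: Spec, candidate: Callable[[Any], Any]) -> list[str]:
    """Run a candidate against every scenario and return the names of those that fail."""
    failures = []
    for s in spec.scenarios:
        try:
            ok = candidate(s.given) == s.expect
        except Exception:
            ok = False  # a crash counts as a failed scenario, never a pass
        if not ok:
            failures.append(s.name)
    return failures


email_spec = Spec(
    intent="Normalize user email addresses before storage",
    scenarios=(
        Scenario("lowercases", "Alice@Example.COM", "alice@example.com"),
        Scenario("trims whitespace", "  bob@example.com ", "bob@example.com"),
        Scenario("rejects missing @", "not-an-email", None),
    ),
)


def agent_candidate(raw: str):
    # Stand-in for agent-generated code: it lowercases but neither trims nor rejects.
    return raw.lower()


failures = validate(email_spec, agent_candidate)
print(failures)  # ['trims whitespace', 'rejects missing @']
```

Here validation catches that the candidate only lowercases its input. Treating an exception as a failure, rather than skipping that scenario, keeps the guardrail from passing by omission.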

Human First AI Governance (firm, not fearful)

Our stance at PM2NET isn’t anti-AI. It’s pro-accountability.

Human First AI Governance means:

Humans define intent and success criteria up front (clear specs, bounded scope).

Humans set explicit checkpoints where autonomy must pause for review.

Testing and validation expand beyond unit tests into behavioral scenarios (the direction the video highlights).

Risk mitigation is treated as a first-class engineering deliverable—not paperwork after a deployment.
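The checkpoint principle can be expressed as a pipeline that refuses to continue past designated steps without approval. This is a minimal sketch: `Step`, `run_with_checkpoints`, and the `approve` callback are hypothetical names, and in practice `approve` would route to a person (through a review queue or ticket, for example) rather than to a function that returns a fixed answer.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Step:
    name: str
    action: Callable[[], str]   # the work an agent would perform
    needs_review: bool = False  # checkpoint: autonomy must pause here


def run_with_checkpoints(steps: list[Step], approve: Callable[[Step], bool]) -> list[str]:
    """Run steps in order; at each checkpoint, stop unless the approver says yes."""
    log = []
    for step in steps:
        if step.needs_review and not approve(step):
            log.append(f"halted at {step.name}: approval withheld")
            break
        log.append(f"{step.name}: {step.action()}")
    return log


steps = [
    Step("generate change", lambda: "diff ready"),
    Step("run scenarios", lambda: "all scenarios passed"),
    Step("deploy to production", lambda: "deployed", needs_review=True),
]


def reviewer(step: Step) -> bool:
    # Stand-in for a human reviewer who declines this production deploy.
    return False


for line in run_with_checkpoints(steps, reviewer):
    print(line)
# The last line printed is "halted at deploy to production: approval withheld".
```

The approval happens before the step's action runs, so a declined checkpoint means the risky action never executes, and the returned log records where and why the pipeline stopped.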

Bottom line

The “dark factory” idea is a useful north star for understanding where the frontier is going. But for most enterprises, the right move isn’t to chase Level 5 autonomy—it’s to build the governed path that makes Levels 2–4 safe, measurable, and resilient.

This video is evidence that the pace of change is real. Our conclusion is simple: when the tempo increases, governance must become more intentional—not less.